Tips and tricks

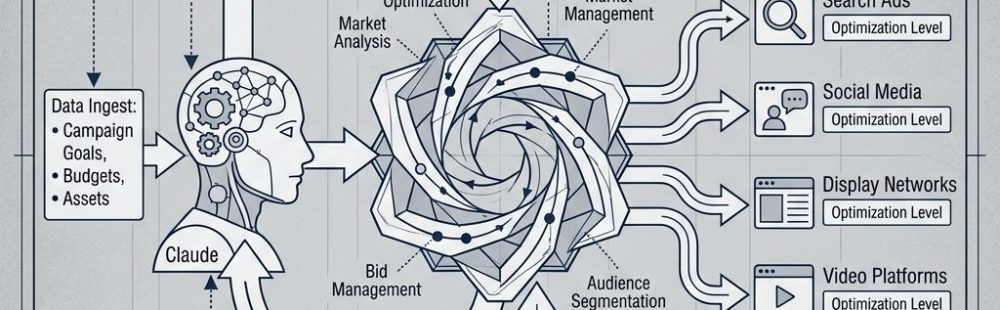

This workflow runs Claude, Nano Banana Pro, and Seedance 2.0 while you’re away from the computer. Give it a product, and it looks up ad strategies, generates AI avatars, and spits out a batch of UGC videos ready to publish. Schedule it to run overnight and you’ll have something to review in the morning.

What You Need

1. Claude Desktop App. Download at claude.ai/download. Scheduled tasks require a Pro, Max, Team, or Enterprise plan; free plans don’t include Cowork or Claude Code.

2. Cowork (or Claude Code). We’re using Cowork for this example. It’s the agentic workspace inside Claude Desktop where scheduled tasks live.

- Open Claude Desktop

- Look for the Cowork tab in the left sidebar

- If it’s missing, go to Claude → Check for Updates

- Run Chrome with the Claude extension enabled

One thing worth knowing: tasks only run while your computer is awake and the app is open. If it goes to sleep mid-run, Cowork will resume the next time you open the app.

3. A Higgsfield account. Sign up at Higgsfield. Be logged in before the workflow runs; the prompt assumes you’re already authenticated.

4. A folder of reference images. Put your product photos, environment shots, or inspiration images in a folder. You’ll need the exact path.

- Mac: right-click → hold Option → “Copy as Pathname”

- Windows: Shift + right-click → “Copy as path”

You can also skip this step, add your reference photos directly in Higgsfield, and update the prompt accordingly.
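Before scheduling a run, it can help to confirm that the copied path points to a real folder and that the folder contains images. The following is a minimal sketch, not part of Cowork or Higgsfield: the function name `list_reference_images` and the list of extensions are assumptions made for illustration.

```python
from pathlib import Path

# Assumed set of image extensions; adjust to match the files you upload.
IMAGE_EXTENSIONS = {".png", ".jpg", ".jpeg", ".webp"}


def list_reference_images(folder: str) -> list[Path]:
    """Return the image files directly inside `folder`, sorted by name.

    Raises NotADirectoryError if the path does not point to a folder.
    """
    # "Copy as path" on Windows wraps the path in double quotes, so strip them.
    path = Path(folder.strip().strip('"')).expanduser()
    if not path.is_dir():
        raise NotADirectoryError(f"Not a folder: {path}")
    return sorted(
        p for p in path.iterdir()
        if p.is_file() and p.suffix.lower() in IMAGE_EXTENSIONS
    )
```

Calling `list_reference_images` with the path you copied should return at least your product photo. An empty list or an error means the prompt would send Claude to the wrong place.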

Running It

Run it manually, or set up a scheduled task:

- Open Claude Desktop and go to Cowork

- Start a new task, type /schedule, and send it

- Give it a name and description

- Pick a frequency, then paste the prompt

Copy everything in the shaded box and replace the placeholders before running. You can also paste the prompt as-is and just tell Claude in plain language what you want — it’ll adjust.

You are a Claude Computer Use agent. Operate Chrome and Higgsfield directly to complete this workflow. Use the browser, keyboard, and mouse as needed.

Do not ask for confirmation between steps.

You are executing a full UGC video production pipeline for [YOUR PRODUCT NAME].

Follow every step in exact order. Do not skip steps. Do not wait for images

or videos to finish generating before submitting the next job.

After each major action, output: ✅ [what was completed]

--- PRODUCT UNDERSTANDING — DO THIS BEFORE ANYTHING ELSE ---

Visit and fully study: [YOUR PRODUCT URL]

What this product physically is:

[YOUR PRODUCT DESCRIPTION]

From the product page extract: exact product name and tagline, all features

and selling points, price and variants, exact visual appearance (shape, color,

finish), any social proof, and the brand voice. Understand precisely what the

product looks like in someone's hands from every angle — this physical accuracy

must be reflected in every video prompt.

--- STEP 1 — RESEARCH WINNING UGC HOOKS AND ANGLES ---

Research high-performing UGC content for [YOUR PRODUCT NAME] and its category.

Reverse engineer what makes winning videos stop the scroll and convert. This

research gates everything that follows. Do not proceed to Step 2 until complete.

Search: Meta Ad Library, TikTok, YouTube Shorts, Pinterest, and Google.

For each winning piece of content, document:

- First 1–3 seconds: what is happening visually and what is being said

- Hook type: curiosity / problem / desire / shock / identity / humor

- Full narrative arc and emotional triggers activated

- Visual style: environment, lighting, pacing, camera angle, creator aesthetic

- When and how the product appears on camera

- Root cause: 3–5 sentences on exactly why this video works

Compile into a UGC RESEARCH BRIEF:

- Top 5–7 proven hooks ranked by effectiveness

- Top 5–7 visual styles for this product category

- Key emotional triggers that drive conversions

- Recurring language and claims from winning ads

- [NUMBER OF AVATARS] distinct video angles — one per avatar — each with:

hook type, narrative arc, tone, visual style, and the creator persona

and aesthetic that best fits this angle based on winning content

✅ Output confirmation when UGC RESEARCH BRIEF is complete

--- STEP 2 — GENERATE AVATARS IN HIGGSFIELD ---

Generate [NUMBER OF AVATARS] hyper-realistic avatars based entirely on the

creator personas defined in your UGC RESEARCH BRIEF. Let the research determine

gender, age, aesthetic, and energy — do not default to assumptions. Each avatar

must be distinct and look like someone who would genuinely use and post about

this product.

Write every avatar prompt yourself. Each must include:

- Age range and aesthetic grounded in research

- Specific skin tone description

- Skin texture: visible pores, natural texture, zero AI smoothing,

zero retouching — state this explicitly

- One natural imperfection: faint freckles, small scar, under-eye shadows,

or slight uneven skin tone

- Hair: texture, length, color, how it is worn

- Minimal makeup described product by product

- Wardrobe: specific garment, color, fit

- Background: real environment, lighting, color temperature

- Camera specs: body, focal length, aperture

- These exact phrases in every prompt: "photojournalistic realism",

"zero AI skin smoothing", "natural facial asymmetry preserved",

"no plastic skin texture", "no uncanny valley symmetry correction"

- Energy and vibe

Navigation:

1. Open Chrome → navigate to Higgsfield (already logged in)

2. Click Image in the top toolbar → Create Image

3. Confirm model: Nano Banana Pro — Extra Free Gens: OFF

4. Paste prompt → submit immediately — do not wait for generation

5. Repeat for all [NUMBER OF AVATARS] avatars without waiting for any to finish

✅ Output confirmation when all avatar jobs are submitted

--- STEP 3 — GENERATE VIDEOS IN HIGGSFIELD ---

Generate [VIDEOS PER AVATAR] unique videos per avatar. Each must use a different hook, script, angle, and visual style. Every video must feel like something a real person filmed on their own phone — not a produced ad. All decisions must be rooted in the UGC RESEARCH BRIEF.

All videos must be exactly [VIDEO LENGTH IN SECONDS] seconds long.

[VIDEO LENGTH IN SECONDS] must be between 4 and 15. If a value outside this range was provided, default to 10 seconds.

Write all video prompts and scripts before submitting any generation job.

Hook diversity — no two videos may share the same hook. Spread across all videos:

Discovery, Problem/solution, Aesthetic lifestyle flex, Casual GRWM, Honest review, Humor hook, Identity/aspirational, Beauty close-up with voiceover, POV scenario, Dependency hook, Shock hook, Before vs after.

Every hook must land in the first 2 seconds. No slow intros. No filler.

For each video write:

A) Higgsfield Video Prompt (minimum 150 words):

- Avatar: exact appearance, wardrobe, expression, energy

- Product: exact appearance, how it is held and used — physically accurate

and correctly depicted at all times

- Physical actions beat by beat through the full video

- Camera: shot type, angle, movement

- Environment: specific location, full background detail

- Lighting: direction, source, quality, color temperature

- Pacing and realism: handheld feel, no studio polish, no AI artifacting

- Aspect ratio: 9:16 vertical

B) Script:

- Word-for-word dialogue timed to [VIDEO LENGTH IN SECONDS] seconds total

- Tone of delivery noted on every line

- Must sound spoken not written — read it aloud mentally before finalising

Format for every video:

VIDEO [N] — AVATAR [N] — ANGLE: [NAME]

PROMPT: [full visual prompt — minimum 150 words]

SCRIPT:

[0:00–0:02] HOOK: "[line]" — [tone], [framing]

[timestamps continue through full video with tone and action notes]

--- VIDEO CREATION PROCEDURE (follow for every single video) ---

Higgsfield persists inputs between jobs. Clear everything before each new

video or you will generate with the wrong content.

Step A — Clear previous inputs:

1. Check if any reference images are already uploaded. If so, hover over

each image until the X appears and click it to remove it.

2. Clear the prompt text field completely.

3. Verify both image slots are empty and the prompt field is blank.

Step B — Upload reference images:

Upload the product reference photo:

1. Click the "Upload Media" card in the video creation interface.

2. A box pops up. Click "Upload Media" inside that box.

3. The file picker opens (Finder on Mac, File Explorer on Windows). Navigate to [YOUR PRODUCT REFERENCE FOLDER PATH].

4. Double-click the product reference photo to select it.

5. Wait for it to fully upload and appear in the box.

6. Click on the product reference photo to select it, then confirm to add it to the video job. Verify it appears in the interface.

Upload the avatar:

7. Click the "Upload Media" card again.

8. The box pops up again. This time click "Image Generations"

instead of "Upload Media".

9. Your previously generated avatars will appear here. Click the correct avatar for this video to select it, then confirm to add it to the video job. Verify it appears in the interface.

Step C — Enter prompt and submit:

1. Paste the video prompt into the prompt field.

2. Confirm model is set to Seedance 2.0.

3. Confirm both reference images are visible.

4. Submit immediately — do not wait for generation to complete.

5. Move to the next video and repeat Steps A through C from scratch.

✅ Output confirmation when all video jobs are submitted

--- OPERATIONAL RULES ---

1. Never wait between submissions — queue everything and move on

2. Clear all Higgsfield fields before every new video — never assume clean

3. Both reference images must be confirmed visible before every video submit

4. Each avatar is used exactly [VIDEOS PER AVATAR] times

5. No two videos share a hook — all opening lines must be distinct

6. Order is locked: product understanding → research → avatars → videos

7. All prompts and scripts written before any video generation begins

8. Product depicted physically accurately in every video prompt

9. Every video prompt minimum 150 words

10. Image model: Nano Banana Pro — Extra Free Gens: OFF

11. Video model: Seedance 2.0 — Aspect ratio: 9:16

--- FINAL CHECKLIST ---

[ ] Product page read and appearance fully understood

[ ] UGC RESEARCH BRIEF complete with one angle per avatar

[ ] All avatar prompts written from research and submitted

[ ] All video prompts and scripts written before generation begins

[ ] Higgsfield fields cleared before every video

[ ] Both reference images confirmed visible before every video submit

[ ] No two videos share a hook

[ ] All video jobs submitted
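That is the end of the prompt. If you would rather fill in the placeholders with a script than by hand, the substitution and a few of the prompt's own constraints can be checked before you paste it. This is a minimal sketch: `fill_prompt`, `clamp_video_length`, and `plan_jobs` are hypothetical helpers, not part of any Claude or Higgsfield tooling. The placeholder names, the 4 to 15 second range, the 10 second fallback, and the 12 hook types are taken from the prompt. Treating more than 12 videos as "hook types must repeat" is my reading of the hook list, not a stated rule.

```python
# Placeholders that appear in the prompt and must be replaced by the user.
REQUIRED_PLACEHOLDERS = (
    "YOUR PRODUCT NAME",
    "YOUR PRODUCT URL",
    "YOUR PRODUCT DESCRIPTION",
    "NUMBER OF AVATARS",
    "VIDEOS PER AVATAR",
    "VIDEO LENGTH IN SECONDS",
    "YOUR PRODUCT REFERENCE FOLDER PATH",
)

# Number of hook types listed under "Hook diversity" in Step 3.
HOOK_TYPES_AVAILABLE = 12


def clamp_video_length(seconds: int) -> int:
    """Mirror the prompt's rule: 4-15 seconds is valid, otherwise use 10."""
    return seconds if 4 <= seconds <= 15 else 10


def fill_prompt(template: str, values: dict[str, str]) -> str:
    """Replace each required [PLACEHOLDER] with its value.

    Other bracketed text in the prompt, such as [N] or [line], is left
    alone because Claude fills those in itself.
    """
    missing = [name for name in REQUIRED_PLACEHOLDERS if name not in values]
    if missing:
        raise ValueError(f"Missing values for: {', '.join(missing)}")
    filled = template
    for name in REQUIRED_PLACEHOLDERS:
        filled = filled.replace(f"[{name}]", str(values[name]))
    return filled


def plan_jobs(avatars: int, videos_per_avatar: int) -> tuple[int, bool]:
    """Return (total videos, whether hook types would have to repeat)."""
    total = avatars * videos_per_avatar
    return total, total > HOOK_TYPES_AVAILABLE
```

For example, `plan_jobs(3, 4)` gives 12 videos with no repeated hook types, while `plan_jobs(4, 4)` gives 16 and flags that some hook types would be reused. Passing a requested length through `clamp_video_length` before filling the template keeps the value consistent with what the prompt would fall back to anyway.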
